I watched a recent hackathon where a team of developers tried to introduce AI agents into their workflow. It started with good intentions - “let’s automate the code review.” Everyone was excited. The idea was simple: let the AI handle the repetitive work so the team could focus on bigger problems.

Within a few hours, they had a code review agent running. It was fast, smart, and surprisingly thorough. Encouraged by that success, they added another agent to run tests automatically after each pull request. Then another to handle deployments. Each agent worked perfectly on its own. But together? Things quickly fell apart.

The deployment agent pushed a build before the testing agent finished validating it. The monitoring agent thought it saw live traffic and started scaling up infrastructure. The code review agent flagged code that had already changed.

No one could tell what had gone wrong. The logs looked fine. Every agent had done exactly what it was told. But collectively, they had created a mess. That’s when it hit me: this wasn’t just engineering chaos anymore. This was Agentic Chaos - a new kind of disorder created not by humans, but by machines trying to help.

The Promise and the Problem

AI has become part of the developer’s toolkit faster than anything we’ve ever seen. It writes code, generates tests, documents APIs, even deploys applications. On paper, it should make everything easier. But it doesn’t - at least, not yet.

Most teams are adding AI into their pipelines without a real strategy. Each group builds its own automation, connects it to their workflow, and assumes it’ll play nicely with everything else. For a while, it does. Then one day, the system starts fighting itself. Agents push changes without knowing what others are doing. Pipelines race ahead of validation steps. Automated processes loop infinitely because no one told the agents when to stop.

It’s not malicious. It’s just uncoordinated autonomy. That’s Agentic Chaos - when your AI agents are technically correct but collectively wrong.

The Hard Truth About Scaling AI

Building one AI agent is easy. Scaling to many agents that cooperate is hard. The SDLC isn’t a straight line from code to deployment. It’s a web of dependencies, feedback loops, and moving parts. Code changes affect tests, tests affect builds, builds affect releases, and it all loops back.

When agents operate without seeing that full picture, they start to act like disconnected teams - except faster and with more confidence. That’s how chaos spreads quietly and quickly. Every team we spoke to was hitting the same wall: autonomy was outpacing awareness. That’s when we decided to do something about it.

Why We Built Overcut

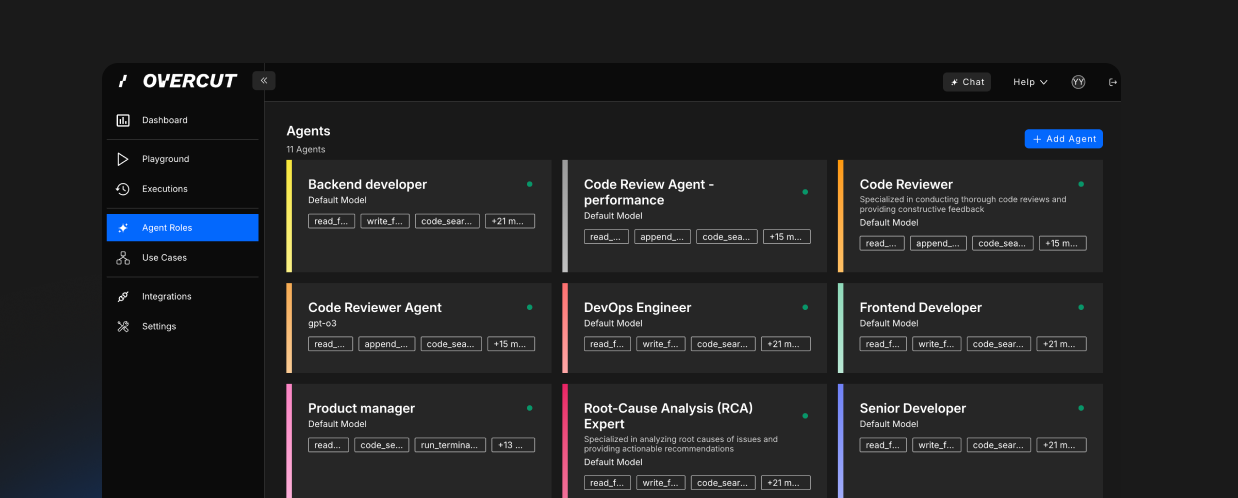

We built Overcut because we saw what was coming. Long before Agentic Chaos started showing up in pipelines, the pattern was already forming. AI was getting smarter, automation was getting faster, and developers were starting to plug agents into every part of their SDLC. But there was one problem no one was talking about: trust.

We couldn’t find a single tool that could run automations inside your SDLC - at scale - and still be trusted to take things all the way to production. Everyone was building clever agents, but no one was thinking about how they’d work together, how they’d stay aligned, or how humans would stay in control once AI started running the show. We saw that gap clearly - and we knew it would define the next generation of engineering.

That’s why we built Overcut. Not as another automation layer, but as a foundation for trust. Overcut gives your engineering organization deep, rich, real-time context - connecting your code, tests, builds, deployments, and incidents into one coherent picture. It’s the shared language that lets AI agents and humans operate with full awareness of what’s happening across the system.

When your testing agent understands what your deployment agent is doing, and when your monitoring agent knows which branch just merged, everything starts to sync. The chaos fades. Automation becomes collaboration. That’s the world we set out to build - one where AI in your SDLC is something you can actually trust to take to production.
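
To make the idea of shared context concrete, here is a minimal sketch in Python. It is not Overcut’s API: `SharedContext`, `Stage`, `Status`, and `can_deploy` are hypothetical names invented for illustration, and the store is a plain in-memory dictionary. It only shows the principle that a deployment agent should check what the testing agent has reported for the exact commit before it acts.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    REVIEW = "review"
    TEST = "test"
    DEPLOY = "deploy"


class Status(Enum):
    RUNNING = "running"
    PASSED = "passed"
    FAILED = "failed"


@dataclass
class SharedContext:
    """Hypothetical in-memory record of what each agent reported per commit."""
    _events: dict = field(default_factory=dict)

    def report(self, commit: str, stage: Stage, status: Status) -> None:
        # Each agent publishes its latest status for a given commit.
        self._events[(commit, stage)] = status

    def status_of(self, commit: str, stage: Stage):
        # Returns None when no agent has reported on this commit and stage.
        return self._events.get((commit, stage))


def can_deploy(ctx: SharedContext, commit: str) -> bool:
    """Allow a deploy only if tests finished and passed for this exact commit."""
    return ctx.status_of(commit, Stage.TEST) is Status.PASSED


ctx = SharedContext()

# The testing agent is still validating the build.
ctx.report("abc123", Stage.TEST, Status.RUNNING)
blocked = can_deploy(ctx, "abc123")

# The testing agent finishes; the same commit is now safe to deploy.
ctx.report("abc123", Stage.TEST, Status.PASSED)
allowed = can_deploy(ctx, "abc123")

# A newer commit that nobody has tested yet is not deployable.
stale = can_deploy(ctx, "def456")
```

In the hackathon story, the deployment agent had no such check to consult, so it pushed a build that was still being validated. Keying the status on the commit also covers the stale-review case: a result reported for an older commit says nothing about the code that replaced it.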